DoE2Vec

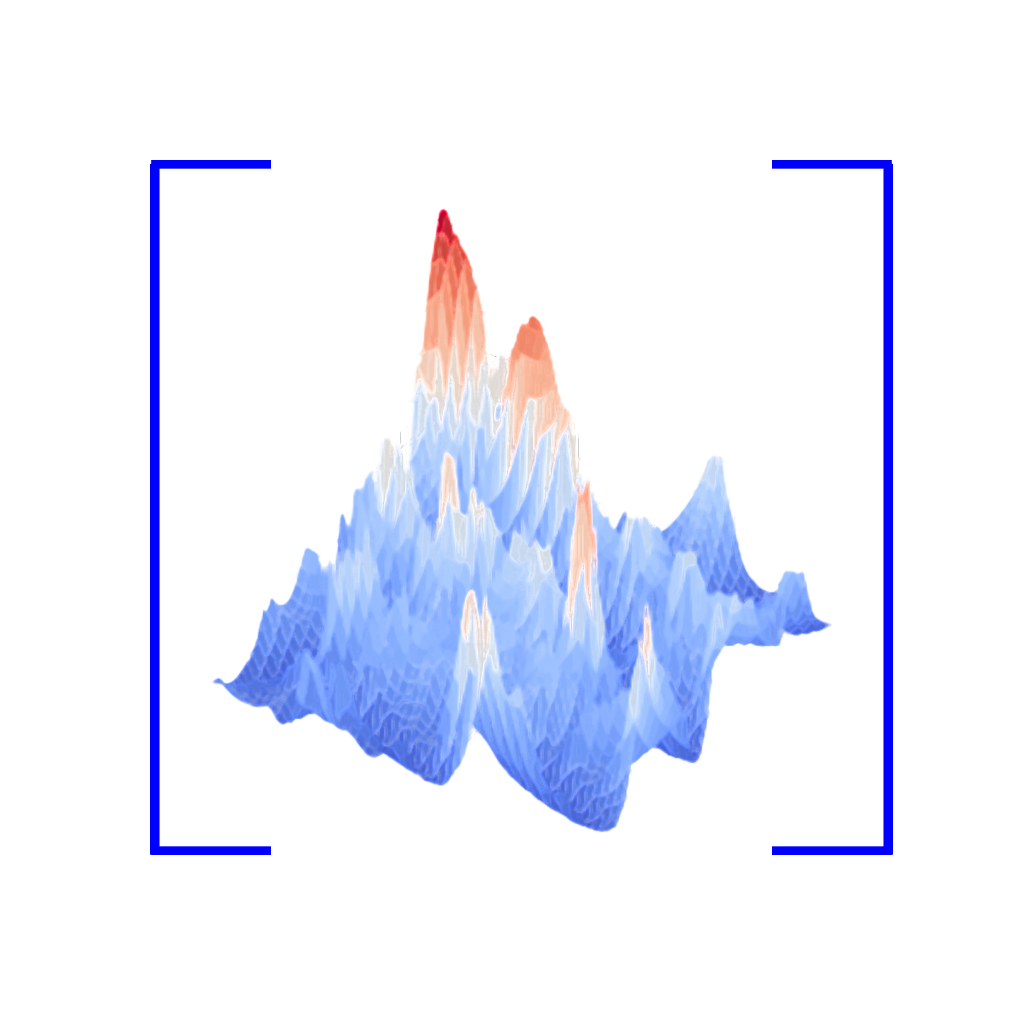

DoE2Vec is a self-supervised approach to learn exploratory landscape analysis features from design of experiments. The model can be used for downstream meta-learning tasks such as learning which optimizer works best on a given optimization landscape.


Description

DoE2Vec is a self-supervised approach to learn exploratory landscape analysis features from design of experiments. The model can be used for downstream meta-learning tasks, such as learning which optimizer works best on a given optimization landscape or classifying optimization landscapes into function groups.

The approach uses randomly generated functions and can also be used to find a "cheap" reference function given a DOE. The model uses Sobol sequences as the default sampling method. A custom sampling method can also be used. Both the samples and the landscape should be scaled between 0 and 1.
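The scaling requirement can be illustrated with a minimal, standard-library-only sketch. It assumes a hypothetical 2-dimensional sphere function on the box [-5, 5]^2 and uses plain uniform random sampling as a stand-in custom sampler (not the Sobol default). The helpers `scale_samples` and `min_max_scale` are illustrative names and are not part of the doe2vec package.

```python
import random


def scale_samples(samples, lower, upper):
    """Map each coordinate from [lower_i, upper_i] to [0, 1]."""
    return [
        [(x - lo) / (hi - lo) for x, lo, hi in zip(point, lower, upper)]
        for point in samples
    ]


def min_max_scale(values):
    """Rescale landscape values so the minimum is 0 and the maximum is 1."""
    lo, hi = min(values), max(values)
    if hi == lo:
        # A constant landscape carries no shape information.
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]


def sphere(point):
    """Hypothetical test function used only for this illustration."""
    return sum(x * x for x in point)


rng = random.Random(42)
lower, upper = [-5.0, -5.0], [5.0, 5.0]  # assumed search box
n_samples = 2 ** 8                        # same sample count as m = 8

# Custom sampler: uniform random points in the original search box.
raw = [[rng.uniform(lo, hi) for lo, hi in zip(lower, upper)]
       for _ in range(n_samples)]

X = scale_samples(raw, lower, upper)          # samples in [0, 1]^2
y = min_max_scale([sphere(p) for p in raw])   # landscape in [0, 1]
```

Scaling the landscape values per DOE keeps functions with very different output ranges comparable, at the cost of discarding the absolute magnitude of the objective.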

Install package via pip

`pip install doe2vec`

Afterwards you can use the package via:

`from doe2vec import doe_model`

Load a model from the HuggingFace Hub

Available models can be viewed here: https://huggingface.co/BasStein

A model name is built up like `BasStein/doe2vec-d2-m8-ls16-VAE-kl0.001`

Here `d2` gives the number of dimensions (2), `m8` the number of samples (2^8), `ls16` the latent size (16), `VAE` the model type (variational autoencoder) and `kl0.001` the KL loss weight (0.001).
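As a rough sketch of this naming scheme, the following helpers build and parse such a name. They are hypothetical utilities written for illustration, not part of the doe2vec package, and the pattern is inferred only from the single example name above, so other published models may deviate from it.

```python
import re

# Pattern inferred from "BasStein/doe2vec-d2-m8-ls16-VAE-kl0.001" (assumption).
MODEL_NAME = re.compile(
    r"^(?P<user>[^/]+)/doe2vec"
    r"-d(?P<dim>\d+)"
    r"-m(?P<m>\d+)"
    r"-ls(?P<latent>\d+)"
    r"-(?P<model_type>[A-Za-z]+)"
    r"-kl(?P<kl>[0-9.]+(?:[eE][+-]?[0-9]+)?)$"
)


def build_model_name(dim, m, latent_dim, model_type="VAE",
                     kl_weight=0.001, user="BasStein"):
    """Assemble a model name from its components."""
    return f"{user}/doe2vec-d{dim}-m{m}-ls{latent_dim}-{model_type}-kl{kl_weight}"


def parse_model_name(name):
    """Split a model name into its components; raise if it does not match."""
    match = MODEL_NAME.match(name)
    if match is None:
        raise ValueError(f"unexpected model name: {name!r}")
    m = int(match["m"])
    return {
        "dim": int(match["dim"]),
        "m": m,
        "n_samples": 2 ** m,  # m is the exponent, not the sample count
        "latent_dim": int(match["latent"]),
        "model_type": match["model_type"],
        "kl_weight": float(match["kl"]),
    }


info = parse_model_name("BasStein/doe2vec-d2-m8-ls16-VAE-kl0.001")
print(info)
```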

Example code for loading a Hugging Face model:

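```python
from doe2vec import doe_model

obj = doe_model(
    2,                 # d: number of dimensions
    8,                 # m: 2^8 samples per DOE
    n=50000,           # number of generated functions
    latent_dim=16,     # size of the latent encoding
    kl_weight=0.001,   # KL loss weight
    use_mlflow=False,
    model_type="VAE",  # variational autoencoder
)
obj.load_from_huggingface()

# test the model
obj.plot_label_clusters_bbob()
```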

How to Set Up your Environment for Development

```shell
python3.8 -m venv env
source ./env/bin/activate
pip install -r requirements.txt
```

Generate the Data Set

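To generate the artificial function data set for a given dimensionality and sample size, run the following code:

```python
from doe2vec import doe_model

# Example values; choose your own.
d = 2            # number of dimensions
m = 8            # 2^m samples per DOE
latent_dim = 16  # size of the output encoding vector

obj = doe_model(d, m, n=50000, latent_dim=latent_dim)
if not obj.load():
    # Nothing saved yet: generate the data, then train and save the model.
    obj.generateData()
    obj.compile()
    obj.fit(100)
    obj.save()
```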

Here `d` is the number of dimensions, `m` the number of samples (2^m) per DOE, `n` the number of functions generated and `latent_dim` the size of the output encoding vector.

Once a data set has been generated and an encoder has been trained, they can be loaded with the `load()` function.
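To get a feel for how `m` and `n` drive the size of the generated data set, here is a back-of-the-envelope sketch. It assumes one landscape value is stored per sample per function and 4 bytes per value; both are assumptions made for this estimate, not statements about how doe2vec stores its data. The helper `dataset_size` is hypothetical.

```python
def dataset_size(m, n, bytes_per_value=4):
    """Estimate the number of stored landscape values and their raw size."""
    samples_per_doe = 2 ** m            # m is an exponent
    total_values = n * samples_per_doe  # one value per sample per function
    return samples_per_doe, total_values, total_values * bytes_per_value


samples_per_doe, total_values, total_bytes = dataset_size(m=8, n=50000)
print(f"{samples_per_doe} samples per DOE, {total_values:,} values, "
      f"about {total_bytes / 1e6:.1f} MB")
# prints: 256 samples per DOE, 12,800,000 values, about 51.2 MB
```

Because the sample count grows as 2^m, increasing `m` by one doubles this estimate, while `n` scales it linearly.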
